Update README.md

Browse files

README.md

CHANGED

|

@@ -64,19 +64,147 @@ image = pipe(prompt=prompt, num_inference_steps=4, guidance_scale=0).images[0]

|

|

| 64 |

|

| 65 |

### Image-to-Image

|

| 66 |

|

| 67 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 68 |

|

| 69 |

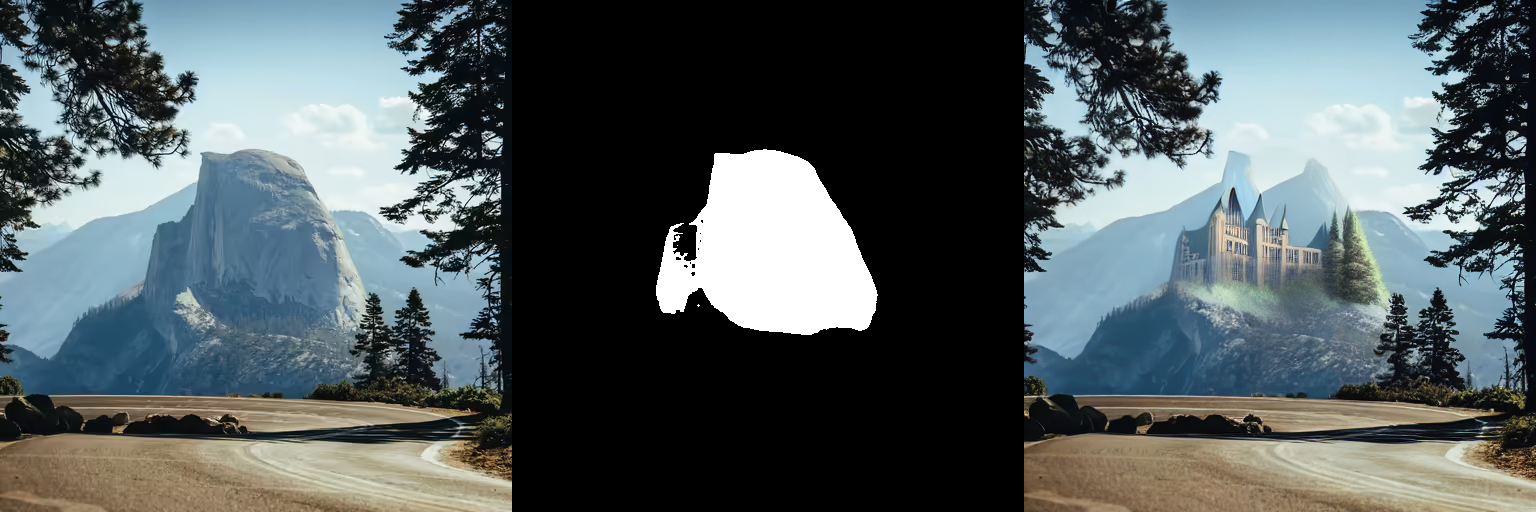

### Inpainting

|

| 70 |

|

| 71 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 72 |

|

| 73 |

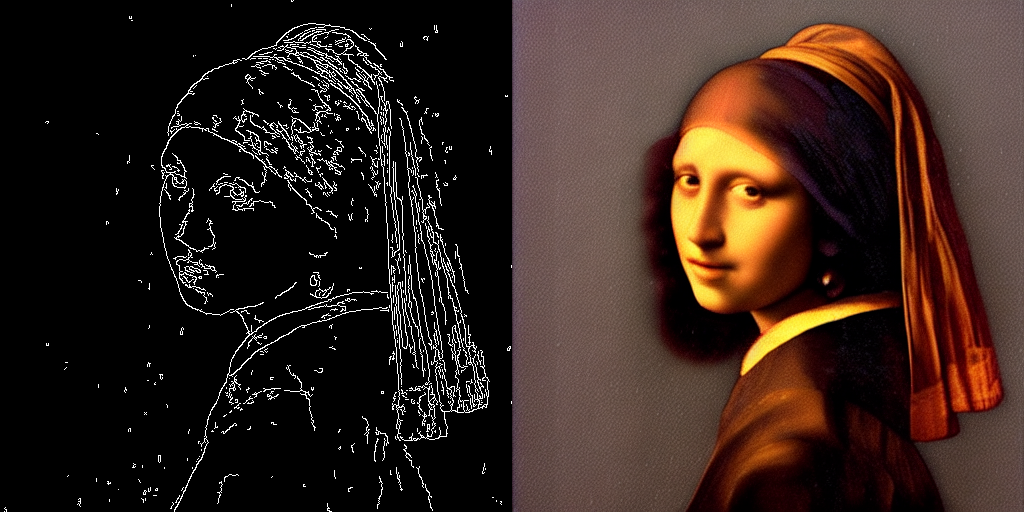

### ControlNet

|

| 74 |

|

| 75 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 76 |

|

| 77 |

-

|

| 78 |

|

| 79 |

-

Works as well! TODO docs

|

| 80 |

|

| 81 |

## Speed Benchmark

|

| 82 |

|

|

|

|

| 64 |

|

| 65 |

### Image-to-Image

|

| 66 |

|

| 67 |

+

LCM-LoRA can be applied to image-to-image tasks too. Let's look at how we can perform image-to-image generation with LCMs. For this example we'll use the [dreamshaper-7](https://huggingface.co/Lykon/dreamshaper-7) model and the LCM-LoRA for `stable-diffusion-v1-5 `.

|

| 68 |

+

|

| 69 |

+

```python

|

| 70 |

+

import torch

|

| 71 |

+

from diffusers import AutoPipelineForImage2Image, LCMScheduler

|

| 72 |

+

from diffusers.utils import make_image_grid, load_image

|

| 73 |

+

|

| 74 |

+

pipe = AutoPipelineForImage2Image.from_pretrained(

|

| 75 |

+

"Lykon/dreamshaper-7",

|

| 76 |

+

torch_dtype=torch.float16,

|

| 77 |

+

variant="fp16",

|

| 78 |

+

).to("cuda")

|

| 79 |

+

|

| 80 |

+

# set scheduler

|

| 81 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 82 |

+

|

| 83 |

+

# load LCM-LoRA

|

| 84 |

+

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

| 85 |

+

pipe.fuse_lora()

|

| 86 |

+

|

| 87 |

+

# prepare image

|

| 88 |

+

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png"

|

| 89 |

+

init_image = load_image(url)

|

| 90 |

+

prompt = "Astronauts in a jungle, cold color palette, muted colors, detailed, 8k"

|

| 91 |

+

|

| 92 |

+

# pass prompt and image to pipeline

|

| 93 |

+

generator = torch.manual_seed(0)

|

| 94 |

+

image = pipe(

|

| 95 |

+

prompt,

|

| 96 |

+

image=init_image,

|

| 97 |

+

num_inference_steps=4,

|

| 98 |

+

guidance_scale=1,

|

| 99 |

+

strength=0.6,

|

| 100 |

+

generator=generator

|

| 101 |

+

).images[0]

|

| 102 |

+

make_image_grid([init_image, image], rows=1, cols=2)

|

| 103 |

+

```

|

| 104 |

+

|

| 105 |

+

|

| 106 |

+

|

| 107 |

|

| 108 |

### Inpainting

|

| 109 |

|

| 110 |

+

LCM-LoRA can be used for inpainting as well.

|

| 111 |

+

|

| 112 |

+

```python

|

| 113 |

+

import torch

|

| 114 |

+

from diffusers import AutoPipelineForInpainting, LCMScheduler

|

| 115 |

+

from diffusers.utils import load_image, make_image_grid

|

| 116 |

+

|

| 117 |

+

pipe = AutoPipelineForInpainting.from_pretrained(

|

| 118 |

+

"runwayml/stable-diffusion-inpainting",

|

| 119 |

+

torch_dtype=torch.float16,

|

| 120 |

+

variant="fp16",

|

| 121 |

+

).to("cuda")

|

| 122 |

+

|

| 123 |

+

# set scheduler

|

| 124 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 125 |

+

|

| 126 |

+

# load LCM-LoRA

|

| 127 |

+

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

| 128 |

+

pipe.fuse_lora()

|

| 129 |

+

|

| 130 |

+

# load base and mask image

|

| 131 |

+

init_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint.png")

|

| 132 |

+

mask_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint_mask.png")

|

| 133 |

+

|

| 134 |

+

# generator = torch.Generator("cuda").manual_seed(92)

|

| 135 |

+

prompt = "concept art digital painting of an elven castle, inspired by lord of the rings, highly detailed, 8k"

|

| 136 |

+

generator = torch.manual_seed(0)

|

| 137 |

+

image = pipe(

|

| 138 |

+

prompt=prompt,

|

| 139 |

+

image=init_image,

|

| 140 |

+

mask_image=mask_image,

|

| 141 |

+

generator=generator,

|

| 142 |

+

num_inference_steps=4,

|

| 143 |

+

guidance_scale=4,

|

| 144 |

+

).images[0]

|

| 145 |

+

make_image_grid([init_image, mask_image, image], rows=1, cols=3)

|

| 146 |

+

```

|

| 147 |

+

|

| 148 |

+

|

| 149 |

+

|

| 150 |

|

| 151 |

### ControlNet

|

| 152 |

|

| 153 |

+

For this example, we'll use the SD-v1-5 model and the LCM-LoRA for SD-v1-5 with canny ControlNet.

|

| 154 |

+

|

| 155 |

+

```python

|

| 156 |

+

import torch

|

| 157 |

+

import cv2

|

| 158 |

+

import numpy as np

|

| 159 |

+

from PIL import Image

|

| 160 |

+

|

| 161 |

+

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, LCMScheduler

|

| 162 |

+

from diffusers.utils import load_image

|

| 163 |

+

|

| 164 |

+

image = load_image(

|

| 165 |

+

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

|

| 166 |

+

).resize((512, 512))

|

| 167 |

+

|

| 168 |

+

image = np.array(image)

|

| 169 |

+

|

| 170 |

+

low_threshold = 100

|

| 171 |

+

high_threshold = 200

|

| 172 |

+

|

| 173 |

+

image = cv2.Canny(image, low_threshold, high_threshold)

|

| 174 |

+

image = image[:, :, None]

|

| 175 |

+

image = np.concatenate([image, image, image], axis=2)

|

| 176 |

+

canny_image = Image.fromarray(image)

|

| 177 |

+

|

| 178 |

+

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)

|

| 179 |

+

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

| 180 |

+

"runwayml/stable-diffusion-v1-5",

|

| 181 |

+

controlnet=controlnet,

|

| 182 |

+

torch_dtype=torch.float16,

|

| 183 |

+

safety_checker=None,

|

| 184 |

+

variant="fp16"

|

| 185 |

+

).to("cuda")

|

| 186 |

+

|

| 187 |

+

# set scheduler

|

| 188 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 189 |

+

|

| 190 |

+

# load LCM-LoRA

|

| 191 |

+

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

| 192 |

+

|

| 193 |

+

generator = torch.manual_seed(0)

|

| 194 |

+

image = pipe(

|

| 195 |

+

"the mona lisa",

|

| 196 |

+

image=canny_image,

|

| 197 |

+

num_inference_steps=4,

|

| 198 |

+

guidance_scale=1.5,

|

| 199 |

+

controlnet_conditioning_scale=0.8,

|

| 200 |

+

cross_attention_kwargs={"scale": 1},

|

| 201 |

+

generator=generator,

|

| 202 |

+

).images[0]

|

| 203 |

+

make_image_grid([canny_image, image], rows=1, cols=2)

|

| 204 |

+

```

|

| 205 |

|

| 206 |

+

|

| 207 |

|

|

|

|

| 208 |

|

| 209 |

## Speed Benchmark

|

| 210 |

|